In this post I’m going to describe how to build a simple Tesla Tracker that runs in AWS (see my GitHub). It’s some Python code that uses an AWS Lambda function to gather data from your car and store it in a DynamoDB table. Because it’s fully serverless (of course there are servers under there somewhere, but you don’t need to think about them), it’s amazingly cheap to run. For this application, Lambda costs me nothing, given the relatively small number of invocations I’m making. The DynamoDB table costs me about 59 cents per month (choose the lowest value you can for “provisioned read and write capacity units” to keep the cost down), and AWS Parameter Store costs me 5 cents per month.

In a prior post, I described my first attempt to build a simple means of gathering telemetry data from my Tesla using Tesla’s owner API. I briefly had some fun watching my AWS DynamoDB table fill up with readings showing the location of my car, the temperature, battery level, etc. However, after a while Tesla locked me out of the API, probably because I was creating too many tokens and I wasn’t storing and reusing them properly (lesson learned). Worse, Tesla uses the same API for your mobile app, which is also your key to the car, so that was locked out too!

It turns out that Tesla has now changed the authentication approach on their API to use OAuth2, so I needed to update my approach, anyway.

As before, I’m using:

- AWS Lambda as a place to run my code, which wakes up periodically and gathers data from my car, and

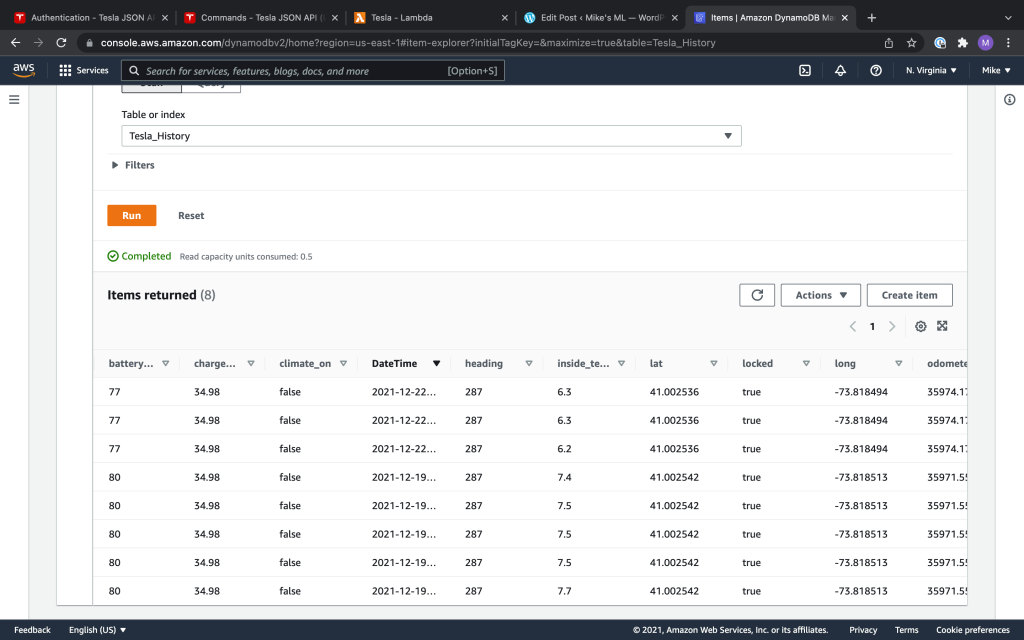

- DynamoDB, Amazon’s managed NoSQL database, to store the data that’s retrieved.
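As a rough sketch (not the code in the repo), here’s how a reading could be shaped before it goes into DynamoDB. The field names (`drive_state`, `charge_state`, `climate_state`) and the `vin`/`timestamp` keys are assumptions about the vehicle data and the table layout, and `write_reading` expects a boto3 DynamoDB `Table` object to be passed in.

```python
import json
import time
from decimal import Decimal


def to_item(vehicle_data, now=None):
    """Flatten a vehicle-data dict into a small DynamoDB-friendly item.

    The nested field names used here are assumptions about the payload.
    """
    drive = vehicle_data.get("drive_state", {})
    charge = vehicle_data.get("charge_state", {})
    climate = vehicle_data.get("climate_state", {})
    item = {
        "vin": vehicle_data.get("vin", "unknown"),          # assumed partition key
        "timestamp": int(now if now is not None else time.time()),  # assumed sort key
        "latitude": drive.get("latitude"),
        "longitude": drive.get("longitude"),
        "battery_level": charge.get("battery_level"),
        "outside_temp": climate.get("outside_temp"),
    }
    # Drop readings the car did not report.
    item = {k: v for k, v in item.items() if v is not None}
    # boto3's DynamoDB resource does not accept Python floats, so round-trip
    # through JSON to turn every float into a Decimal.
    return json.loads(json.dumps(item), parse_float=Decimal)


def write_reading(table, vehicle_data):
    """Store one reading; `table` is a boto3 DynamoDB Table resource."""
    table.put_item(Item=to_item(vehicle_data))
```

Inside the Lambda you would create the table object once, for example with `boto3.resource("dynamodb").Table("TeslaReadings")` (the table name is hypothetical), and call `write_reading` on each run.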

I made a couple of key changes to deal with Tesla’s new authentication approach, and to make sure I was storing and reusing tokens properly:

- Use the teslapy library. This is a Python library for calling the Tesla Owner API. Its volunteer maintainer, Tim, gives you some good instructions on his GitHub about how to manage authentication with the Tesla API and persist the API tokens. Follow those instructions to get your first token. Then you can paste it into AWS Parameter Store (see below), and teslapy should manage renewing the token for you after that.

- Use AWS Systems Manager Parameter Store as a place to persist my tokens. The teslapy GitHub shows you how to store your tokens with SQLite. But when you’re using Lambda, there isn’t really a good place to keep the SQLite database between Lambda runs, unless you want to do something like put it in an S3 bucket. Since we’re using AWS anyway, let’s use the native AWS tool for this instead of SQLite. Parameter Store is tailor-made for this kind of thing. It’s not difficult to use, and you can encrypt your stored credentials. You can look at how I did this in GitHub.

In my prior post, I included some details on how to zip up any Python libraries that your code depends on (in this case, teslapy) and upload them to Lambda. This can be a little bit tricky (I got it wrong the first few tries) but this blogger gives you exact bash commands to use, so if you follow those instructions carefully it’s not so bad.

To use AWS Systems Manager Parameter Store, just go into the AWS Console and create a new parameter. I called mine ‘My_Tesla_Parameters’. That’s where I’m going to store my API tokens. There are actually a few values that teslapy wants to persist, so rather than storing them separately, I just stored them as one big JSON document. Parameter Store has two tiers of parameters: standard and advanced. It turns out that shoving all of my values into a single JSON put me over the 4 KB size limit for standard, so I had to move up to advanced, which costs me 5 cents per month. My Lambda code has functions that get and store the parameter JSON in Parameter Store using the AWS boto API. That way, I get the Tesla API token before making my API calls, and put it back before the Lambda terminates (in case teslapy refreshed the token because it was about to expire).

Another option is AWS Secrets Manager. For my application, though, Secrets Manager looks like overkill, because it seems to be most helpful if you’re rotating credentials. That’s not really relevant here: I’m just storing my Tesla tokens, and teslapy is going to help me refresh them every 45 days or so when they expire.
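Here’s a minimal sketch of that get-and-put pattern, written for boto3’s SSM client. It isn’t the exact code from the repo. The helper names are made up for illustration, `Type="SecureString"` assumes you chose to encrypt the parameter, and the wiring into teslapy assumes its `cache_loader` and `cache_dumper` hooks (the same hooks its README uses for the SQLite example).

```python
import json

PARAM_NAME = "My_Tesla_Parameters"  # the parameter created in the console


def load_cache(ssm, name=PARAM_NAME):
    """Read the token JSON from Parameter Store and return it as a dict."""
    resp = ssm.get_parameter(Name=name, WithDecryption=True)
    return json.loads(resp["Parameter"]["Value"])


def save_cache(ssm, cache, name=PARAM_NAME):
    """Write the (possibly refreshed) token JSON back to Parameter Store."""
    ssm.put_parameter(
        Name=name,
        Value=json.dumps(cache),
        Type="SecureString",  # assumption: the parameter is stored encrypted
        Tier="Advanced",      # the JSON was too big for the standard tier
        Overwrite=True,
    )


def fetch_vehicle_data(email, ssm):
    """Sketch: let teslapy load and save its token cache via Parameter Store."""
    import teslapy  # third-party; has to be bundled into the Lambda package

    with teslapy.Tesla(
        email,
        cache_loader=lambda: load_cache(ssm),
        cache_dumper=lambda cache: save_cache(ssm, cache),
    ) as tesla:
        vehicle = tesla.vehicle_list()[0]  # assumes one car on the account
        return vehicle.get_vehicle_data()
```

In the Lambda, `ssm` would be `boto3.client("ssm")`. Passing the client in as an argument also makes the two helpers easy to exercise with a stand-in object, without touching AWS.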

You need to give your Lambda an IAM role that lets it access the AWS services it needs, namely DynamoDB and Parameter Store. When you create a Lambda, AWS gives you the option of creating a new role. That’s the role the Lambda will assume, but by default it won’t have access to much beyond writing its own logs to CloudWatch. So you can just let AWS create that role for you, but then you need to go into IAM in the AWS Console, find the role, and edit it. Add two ‘inline’ policies to allow access to DynamoDB and Systems Manager Parameter Store. It’s good practice to give those policies the least privileges necessary (i.e., just write access to DynamoDB, and just Put and Get privileges for Parameter Store).
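To make “least privileges” concrete, here’s a sketch that builds two policy documents you could paste into the inline policy editor as JSON. The region, account ID, and table name are placeholders, and the exact actions your code needs are an assumption (this assumes it only calls `PutItem`, `GetParameter`, and `PutParameter`).

```python
import json


def dynamodb_write_policy(region, account_id, table_name):
    """Inline policy allowing only writes to a single DynamoDB table."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["dynamodb:PutItem"],
                "Resource": f"arn:aws:dynamodb:{region}:{account_id}:table/{table_name}",
            }
        ],
    }


def parameter_store_policy(region, account_id, param_name):
    """Inline policy allowing only Get and Put on a single parameter."""
    name = param_name.lstrip("/")  # the ARN already supplies the slash
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["ssm:GetParameter", "ssm:PutParameter"],
                "Resource": f"arn:aws:ssm:{region}:{account_id}:parameter/{name}",
            }
        ],
    }


# Placeholder values; substitute your own region, account ID, and names.
print(json.dumps(dynamodb_write_policy("us-east-1", "123456789012", "TeslaReadings"), indent=2))
print(json.dumps(parameter_store_policy("us-east-1", "123456789012", "My_Tesla_Parameters"), indent=2))
```

If you encrypt the parameter with a customer-managed KMS key, the role would also need permission to use that key.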

You can use AWS EventBridge to wake up the Lambda on a schedule. Below, you can see a few data points in my DynamoDB table, as well as a map showing some of the points captured during a recent trip and stored in my database.

My plan is to let this run for a while and possibly try to use the data for machine learning, or for some kind of dashboard application. Things look good after a few days. I haven’t locked myself out of my car yet!