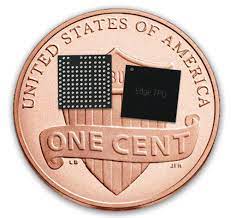

The Google Coral is a 5x5mm ASIC for ML

I recently bought a new toy which I think really represents the future of AI. It’s the Coral chip, from Google. Google is a leader in machine learning with their TensorFlow platform, and now they are pushing down to the “edge” of machine learning with their Coral chip. This is important because if we can buy low-power, cost-effective chips that speed up machine learning inference, we can run ML at a reasonable price on very low-powered hardware such as IoT devices that can’t run ML in the cloud (e.g., because they don’t have the connectivity, or because they need to make quick, autonomous decisions using ML).

Google calls the Coral chip a TPU, for Tensor Processing Unit, because it’s a custom chip that’s designed to natively process tensors, which is how data is represented in neural networks. Because it’s designed to do exactly this, it’s way faster than just using a standard CPU when it comes to doing the math required to do neural network ‘inferencing’, i.e., making predictions using an ML model.

Buying and installing the Google Edge TPU

I ordered the Coral USB accelerator from Mouser. If you like machine learning, it’s a lot of fun for just $60. Of course the main idea of the Coral is that hardware makers will build it into their products, but with the USB accelerator, you can easily add it to your Pi. Here, you can see my Pi with the USB accelerator plugged in.

To install the Edge TPU on your Raspberry Pi, you follow these instructions. They recommend the ‘standard’ runtime but also provide a “maximum operating frequency” runtime, with a warning that it can make your USB accelerator very hot, to the point where it can burn you! You have to accept that warning before they even let you install the runtime software that makes the Coral run that fast.

Well, that sounded like too much fun to ignore, so I tried both the standard and the high-speed modes.

It’s fast!

The Coral chip only works with TensorFlow Lite (TFLITE), which is Google’s version of TensorFlow designed for processing at the edge. In a prior post, I used my library of photos taken by my Raspberry Pi of birds at my bird feeder. I sorted the photos into folders named for the relevant species of bird, and fed them into the TFLITE model maker to build a TFLITE model. Then, I took that model and compiled it for the Coral Edge TPU according to Google’s instructions. You can see my Python notebook for training and compiling my model on GitHub. I got most of this code from examples provided by Google, but if you’re trying to train your own model and run it on a Coral chip, hopefully this will be useful for you. Once you have the model (and the labels in a .txt file), you can use the sample Python code that Google provides to run a TFLITE image recognition model on your Pi. I made a couple of minor modifications to this, but nothing much.
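To give a feel for what that sample code does, here is a minimal sketch, not the exact script I ran. It assumes the `tflite_runtime` package is installed, that the labels file has one label per line, and that the image you pass in has already been resized to the model's input shape and dtype. The helper names (`load_labels`, `make_interpreter`, `top_label`, `classify`) are my own for illustration.

```python
from pathlib import Path

# Shared library name for the Edge TPU runtime on Linux (assumption: a Pi running Linux).
EDGETPU_LIB = "libedgetpu.so.1"


def load_labels(path):
    """Read labels from a text file, assuming one label per line."""
    lines = Path(path).read_text().splitlines()
    return [line.strip() for line in lines if line.strip()]


def make_interpreter(model_path, use_edgetpu=True):
    """Build a TFLite interpreter, optionally delegating to the Edge TPU."""
    # Imported lazily so the pure-Python helpers work without tflite_runtime.
    from tflite_runtime.interpreter import Interpreter, load_delegate

    delegates = [load_delegate(EDGETPU_LIB)] if use_edgetpu else []
    interpreter = Interpreter(model_path=model_path,
                              experimental_delegates=delegates)
    interpreter.allocate_tensors()
    return interpreter


def top_label(scores, labels):
    """Return the (label, score) pair with the highest score."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return labels[best], scores[best]


def classify(interpreter, image, labels):
    """Run one inference; `image` must match the model's input shape and dtype."""
    input_detail = interpreter.get_input_details()[0]
    output_detail = interpreter.get_output_details()[0]
    interpreter.set_tensor(input_detail["index"], image)
    interpreter.invoke()
    scores = interpreter.get_tensor(output_detail["index"])[0]
    return top_label(list(scores), labels)
```

Passing `use_edgetpu=False` runs the same model file path through the CPU interpreter, although a model compiled for the Edge TPU is normally paired with the delegate and the uncompiled model with the CPU.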

Below is a video of a bird being recognized by the model without the Coral chip. The important number to look at is the time to run the TFLITE model, in milliseconds. You can see it takes around 140ms each time we take a grab from the Pi’s video stream and feed it into the model and run it.
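If you want to take this kind of measurement yourself, a rough sketch of a timing helper looks like this. The name `time_ms` and the `runs`/`warmup` parameters are my own, and `fn` stands in for whatever call runs your model once. The warm-up runs are there because the first call often includes one-off setup cost that you probably don't want in the average.

```python
import time
from statistics import mean


def time_ms(fn, runs=20, warmup=2):
    """Call fn() repeatedly and return the per-call wall time in milliseconds."""
    for _ in range(warmup):
        fn()  # discard warm-up calls so one-off setup cost is not counted
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


if __name__ == "__main__":
    # Stand-in workload; replace with a call that runs your model once.
    samples = time_ms(lambda: sum(range(10_000)))
    print(f"mean {mean(samples):.2f} ms over {len(samples)} runs")
```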

Now let’s check out a goldfinch being recognized by the TFLITE model running on the Edge TPU. Wow! Each call of the model executes in under 7ms! That’s 1/20th of the time it takes to run on the Raspberry Pi’s (low-powered) ARM processor.

Just for fun I tried out the “maximum operating frequency” runtime, which Google warned us about. It gets the execution time of my ML model down to around 5ms or so. So that’s around a 25% reduction in execution time vs. the standard Edge TPU runtime, but compared with the time to run the model on the Pi’s CPU (140ms), that 2ms savings is probably not worth the extra power consumption in most cases. If I get a chance I’ll measure the temperature of the TPU after running for a while on the “max” runtime. Maybe I can cook an egg with it.
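For anyone who wants to check the arithmetic, here is a quick sketch using the round figures from this post (140ms on the CPU, 7ms on the standard runtime, 5ms on the max runtime). These are approximate readings, so treat the percentages as ballpark; the function names are just for illustration.

```python
def speedup(baseline_ms, new_ms):
    """How many times faster the new time is than the baseline."""
    return baseline_ms / new_ms


def reduction_pct(baseline_ms, new_ms):
    """Percentage of the baseline time that was saved."""
    return 100.0 * (baseline_ms - new_ms) / baseline_ms


cpu_ms, std_ms, max_ms = 140, 7, 5  # approximate readings from the videos

print(f"standard runtime vs CPU: {speedup(cpu_ms, std_ms):.0f}x faster")  # 20x
print(f"max runtime vs CPU: {speedup(cpu_ms, max_ms):.0f}x faster")       # 28x
print(f"max vs standard: {reduction_pct(std_ms, max_ms):.0f}% less time") # 29%
```

With exactly 7ms and 5ms the saving works out to about 29%; since the standard runtime was actually a bit under 7ms, the real figure is a little lower.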