AWS Rekognition (sic) is a cloud service that provides a number of features for image recognition. You can train it with your own custom images, or you can use the canned training that’s already been done for you, if that’s sufficient.

I decided to try to use the tool both ways: by training it with some custom images and by using the out-of-the-box, pre-trained capabilities of Rekognition.

Rekognition Custom Labels

You use AWS Rekognition Custom Labels when you have images specific to your application that the standard Rekognition service doesn’t handle. For example, suppose you have good widgets and damaged ones coming off your production line. You could train Rekognition to tell the two apart and move the damaged ones off to the side.

I decided to train Rekognition to recognize various birds. I’m thinking of pointing a camera at my bird feeder and sending the output to Rekognition. Caltech has a really nice library of labeled pictures covering 200 different varieties of birds. It includes color images and some outlines of the birds’ shapes; I used only the color images.

First, go into the Rekognition UI in the AWS Console and click on Use Custom Labels. You need to create a data set. Rekognition creates an S3 bucket for you, and you can dump the images in there. You can drag and drop them, but if you have a lot of images you’re better off using the AWS CLI, with a command like this (which copies a folder and all the images in it to an S3 bucket):

```shell
aws s3 cp --recursive 200.Common_Yellowthroat/ s3://mikes-bird-bucket/200.Common_Yellowthroat
```

I only copied some of the folders to S3, because you pay for Rekognition training time and you may not want to load it up with all 18,000 images until you have a feel for what that’s going to cost you. And anyway, a lot of those birds aren’t native to my area.
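If you’d rather script the upload from Python than use the CLI, here is a minimal sketch built on boto3’s S3 `upload_file` method. It assumes boto3 is installed and your credentials are configured; the helper name `upload_folder` is hypothetical, and the S3 client is passed in so the logic can be tried without touching AWS. Unlike `aws s3 cp --recursive`, it only uploads the files directly inside one folder and doesn’t descend into subfolders.

```python
import os


def upload_folder(s3_client, local_dir, bucket, prefix):
    """Upload every file in local_dir to s3://bucket/prefix/<filename>.

    s3_client is expected to be a boto3 S3 client, e.g. boto3.client("s3").
    Returns the list of object keys that were uploaded.
    """
    keys = []
    for name in sorted(os.listdir(local_dir)):
        path = os.path.join(local_dir, name)
        if not os.path.isfile(path):
            continue  # skip subdirectories; this sketch is not recursive
        key = f"{prefix.rstrip('/')}/{name}"
        # upload_file(Filename, Bucket, Key) streams the local file to S3
        s3_client.upload_file(path, bucket, key)
        keys.append(key)
    return keys
```

With real AWS access you would call it as `upload_folder(boto3.client("s3"), "200.Common_Yellowthroat", "mikes-bird-bucket", "200.Common_Yellowthroat")`, which mirrors the CLI command above.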

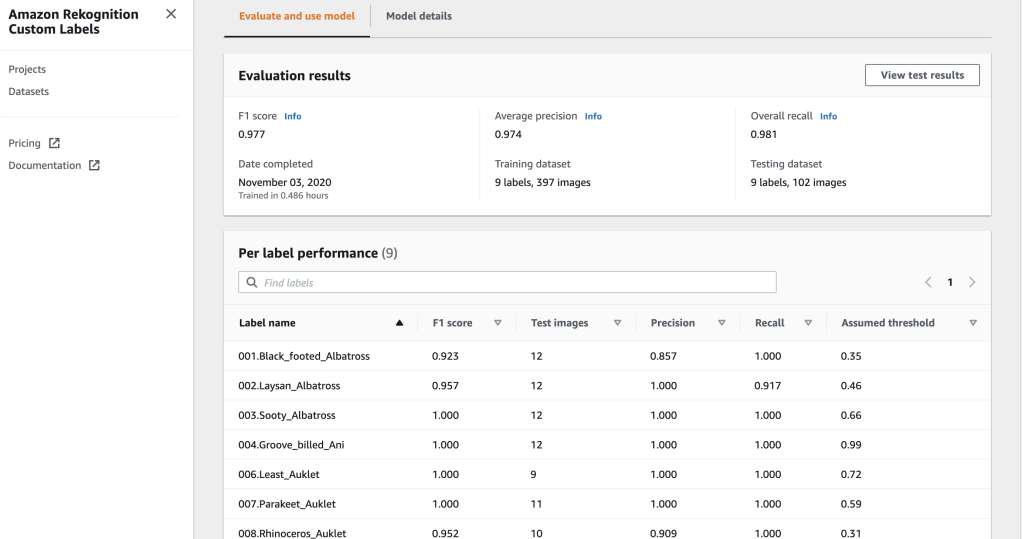

Having created a data set, you click on Project and create a new Rekognition project. You tell Rekognition where your images are. Conveniently, the Caltech bird images are organized in folders named with the correct label for each image, i.e., the type of bird, so Rekognition was able to label each picture with the correct type of bird with no further work by me.

I started training, and waited. And waited. It took a while, maybe an hour, and that was with only about 500 images. I believe Rekognition is optimizing the hyperparameters of the deep learning model it’s using, which takes a while because it means training the model multiple times to find the best hyperparameters. But the results are amazing. My model ended up with an F1 score of 0.977. The best possible score is 1.0, so the model is extremely accurate at classifying the nine types of birds I trained it to recognize. And if you look at these images, you’ll see that the differences between some of the varieties of birds are pretty subtle, so it’s impressive that Rekognition can tell them apart.
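For reference, the F1 score is the harmonic mean of precision P (the fraction of the model’s predictions for a label that were right) and recall R (the fraction of the actual examples of that label the model found): F1 = 2PR / (P + R). A small Python sketch of the calculation for a single label follows; the counts in the usage note are made up for illustration and are not from my model.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; defined as 0 when both are 0."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def precision_recall(true_pos: int, false_pos: int, false_neg: int):
    """Precision and recall for one label, computed from raw counts."""
    predicted = true_pos + false_pos  # everything the model called this label
    actual = true_pos + false_neg     # everything that really is this label
    precision = true_pos / predicted if predicted else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall
```

For example, 45 correct predictions with 1 false positive and 2 misses gives `f1_score(*precision_recall(45, 1, 2))`, which equals 90/93, or about 0.968. Because it is a harmonic mean, F1 stays high only when precision and recall are both high.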

Recognizing New Images

Having trained our model, how do we use it? First, you need to start the model. The Rekognition console provides an AWS CLI command that does that, and it takes about 5 minutes for the model to start up. Then you can use the AWS CLI to give your model an image to classify, but to create a real application you’ll want to write a little code. I found some example code that AWS provides in the SDK for Rekognition, and managed to modify it to work with Rekognition Custom Labels (although the API call I’m using here isn’t mentioned in the documentation, which might not be up to date; I just guessed it, and it worked). Here’s my code:

https://github.com/mesadowski/AWSRekognition/blob/main/Rekognition_Custom_Labels.py
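As a rough illustration of the start, classify, stop cycle, here is a minimal boto3 sketch. It is not the code in the repository above. It assumes you already have the project ARN, the model-version ARN and the version name (e.g. from the console), and that the image is already in S3. The function names are hypothetical; the client methods (`start_project_version`, the `project_version_running` waiter, `detect_custom_labels`, `stop_project_version`) are real Rekognition calls in boto3. The client is passed in, so you would normally use `boto3.client("rekognition")`.

```python
def start_model(client, project_arn, version_arn, version_name, min_units=1):
    """Start a trained Custom Labels model and block until it is RUNNING.

    Startup can take several minutes, and you are billed for the hours the
    model runs from here until it is stopped.
    """
    client.start_project_version(
        ProjectVersionArn=version_arn, MinInferenceUnits=min_units
    )
    waiter = client.get_waiter("project_version_running")
    waiter.wait(ProjectArn=project_arn, VersionNames=[version_name])


def classify_s3_image(client, version_arn, bucket, key, min_confidence=50.0):
    """Return (label, confidence) pairs for one S3 image, best first."""
    response = client.detect_custom_labels(
        ProjectVersionArn=version_arn,
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        MinConfidence=min_confidence,
    )
    labels = [(lab["Name"], lab["Confidence"]) for lab in response["CustomLabels"]]
    return sorted(labels, key=lambda pair: pair[1], reverse=True)


def stop_model(client, version_arn):
    """Stop the model so it no longer accrues hourly inference charges."""
    client.stop_project_version(ProjectVersionArn=version_arn)
```

Keeping start and stop as separate calls makes it easy to run the model only while the feeder camera is active, which matters given the hourly pricing.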

To make this work I had to install boto3 (the AWS SDK for Python) and figure out how to get my AWS API credentials and save them in a file in my .boto folder.

Using Standard Rekognition

I managed to get an AWS account with some free credits, so I was able to train my custom model with nine bird varieties for free, although it did use up a couple of dollars in credits. If I wanted to train my model with all 200 bird varieties, that might cost a lot more. The other thing that worried me was the cost of inference (running the model against new images). You get a few hours as part of the AWS free tier, but after that it costs $4.00 an hour, and if you’re running it 24×7 that adds up.

It looks like AWS is firing up a VM to run my model. It’s hard to tell, because it doesn’t show up as an EC2 instance in the console, but I’m guessing that’s what’s happening because of the delay in starting up the model and because of the cost. So I’m wondering whether, instead of using Custom Labels, I can just use standard out-of-the-box Rekognition.

Pricing for standard image recognition (i.e., no custom labels) is really cheap. It’s priced per API call: you get 5,000 calls per month free, and even when you exhaust those, it costs only 0.1 cents ($0.001) per image. So for my personal application, I’d like to use standard Rekognition if possible. It turns out to be quite good. It can’t distinguish between 10 different kinds of sparrows, but it can tell you that a bird is a sparrow, and not, say, a blackbird.
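To put rough numbers on the two pricing models, here is a back-of-the-envelope comparison in Python using the prices quoted above ($4.00 per hour for a running Custom Labels model; 5,000 free standard calls per month, then $0.001 per image). The 30-day month and the one-snapshot-per-minute rate are assumptions for illustration, and the sketch ignores the free-tier hours and any volume discounts.

```python
HOURS_PER_MONTH = 24 * 30        # assumption: a 30-day month
CUSTOM_LABELS_PER_HOUR = 4.00    # $/hour for a running model (quoted above)
STANDARD_FREE_CALLS = 5000       # free standard calls per month (quoted above)
STANDARD_PER_IMAGE = 0.001       # $0.001, i.e. 0.1 cents per image


def custom_labels_monthly_cost(hours_running=HOURS_PER_MONTH):
    """Cost of keeping a Custom Labels model running for the given hours."""
    return CUSTOM_LABELS_PER_HOUR * hours_running


def standard_monthly_cost(images_per_month):
    """Cost of standard Rekognition after the free monthly calls are used."""
    billable = max(0, images_per_month - STANDARD_FREE_CALLS)
    return billable * STANDARD_PER_IMAGE


images = 60 * 24 * 30  # hypothetical: one feeder snapshot per minute, all month
print(f"Custom Labels, 24x7: ${custom_labels_monthly_cost():,.2f}")
print(f"Standard, {images:,} images: ${standard_monthly_cost(images):,.2f}")
```

Under those assumptions it prints $2,880.00 for Custom Labels versus $38.20 for 43,200 standard calls.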

The Python code to call the REST API for standard Rekognition is similar to the code for Rekognition Custom Labels. The main difference is that there’s no custom model, so you don’t need to start one up ahead of time or refer to it in your API call:

https://github.com/mesadowski/AWSRekognition/blob/main/Rekognition_Standard.py
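For comparison with the Custom Labels sketch, here is a minimal version of the standard call using boto3’s `detect_labels`, laid out in the same “Label / Confidence” style as the output below. It is not the repository code; the function names and the default confidence cutoff are my own choices, and the client is passed in (normally `boto3.client("rekognition")`). Note that no model ARN appears anywhere.

```python
def detect_image_labels(client, bucket, key, max_labels=10, min_confidence=75.0):
    """Call standard Rekognition on an S3 image; return (name, confidence) pairs."""
    response = client.detect_labels(
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        MaxLabels=max_labels,
        MinConfidence=min_confidence,
    )
    return [(label["Name"], label["Confidence"]) for label in response["Labels"]]


def format_labels(key, labels):
    """Render labels in the same line-per-field layout as the output below."""
    lines = [f"Detected labels for {key}"]
    for name, confidence in labels:
        lines.append(f"Label {name}")
        lines.append(f"Confidence {confidence}")
    return "\n".join(lines)
```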

For the above picture, which was in the test data set, the output is:

```text
Detected labels for 010.Red_winged_Blackbird/Red_Winged_Blackbird_0011_5845.jpg
Label Animal
Confidence 99.97032165527344
Label Bird
Confidence 99.97032165527344
Label Beak
Confidence 94.90032196044922
Label Agelaius
Confidence 93.90814971923828
Label Blackbird
Confidence 93.90814971923828
```

So Rekognition is 99.97% sure it’s an animal, and 93.9% sure it’s an Agelaius (the genus of the red-winged blackbird), which is correct.

Summary

AWS Rekognition Custom Labels does a phenomenal job of letting you train a custom image recognition model with no programming. You’ll need to do some programming to use the model, but you don’t need much, if any, deep learning knowledge to get great results. But it’s expensive, because it’s not (as far as I can tell) a true serverless deployment, so you pay for every hour the model is running. By contrast, the standard Rekognition service is truly serverless: you pay only for the API calls you make. And Rekognition has been pretrained to do a lot of useful things out of the box. I was able to get it to recognize blackbirds and sparrows, for example, and it can also do other things, like put bounding boxes around portions of an image to show you where, for example, cars or other objects are located.
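As a sketch of how the bounding boxes come back: for object labels such as cars, the `detect_labels` response includes an `Instances` list, and each instance’s `BoundingBox` gives `Left`, `Top`, `Width` and `Height` as fractions of the image’s width and height. Converting those to pixel coordinates takes only a few lines; the helper names are hypothetical, and you supply the image dimensions yourself.

```python
def box_to_pixels(bounding_box, image_width, image_height):
    """Convert a Rekognition BoundingBox (ratios of image size) to pixels.

    Returns (left, top, right, bottom) as integers, clamped to the image.
    """
    left = bounding_box["Left"] * image_width
    top = bounding_box["Top"] * image_height
    right = left + bounding_box["Width"] * image_width
    bottom = top + bounding_box["Height"] * image_height

    def clamp(value, upper):
        return max(0, min(int(round(value)), upper))

    return (clamp(left, image_width), clamp(top, image_height),
            clamp(right, image_width), clamp(bottom, image_height))


def instance_boxes(detect_labels_response, image_width, image_height):
    """List (label name, instance confidence, pixel box) for located objects."""
    boxes = []
    for label in detect_labels_response["Labels"]:
        for inst in label.get("Instances", []):
            px = box_to_pixels(inst["BoundingBox"], image_width, image_height)
            boxes.append((label["Name"], inst["Confidence"], px))
    return boxes
```

Clamping guards against boxes that extend slightly past the image edge, so the results can be passed straight to a drawing library.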